Stanford Graded AI. It's Brilliant, Broken, and Coming for Payroll!

Every year, Stanford University publishes a massive independent review of where AI actually stands: no sales pitch, no fundraising narrative. Just data. The 2026 edition landed this week. It's over 400 pages long.

30-Second Summary:

1. AI adoption is faster than the internet was. More than half the world already uses it.

2. Demos lie. AI scores near-perfect on tests, but real-world benchmarks barely exist.

3. AI companies are getting less transparent about how their technology works.

4. AI agents are starting to spend money. Visa just built the payment rails for it.

5. 65% of workers say AI helps. But only 27% say their company has actually changed.

Five Findings That Matter:

People adopted AI faster than they adopted the internet.

Think about how long it took the internet to become something everyone used. Now consider this: AI hit 53% global adoption in just three years. The internet didn't reach that point for over a decade. And it's not just individuals — 88% of companies are using AI in some form, and the value these tools deliver to American consumers alone is estimated at $172 billion a year.

Why this matters to you:

The "should we use AI?" conversation is over. Your competitors have already decided yes. The new question is: are you using AI to speed up existing work, or to fundamentally change how your business operates? The companies that treat AI like a faster spreadsheet will be outrun by the ones that treat it as a new way to build a team.

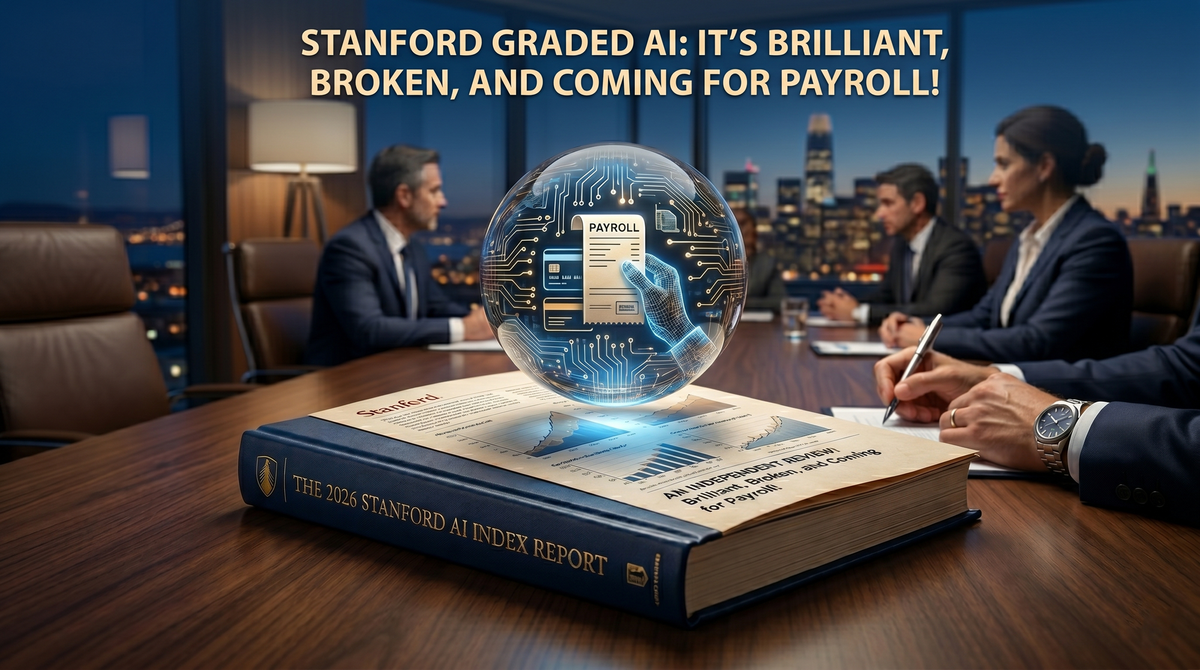

AI aces every test. But tests aren't your business.

On a major coding benchmark, AI performance jumped from 60% to near-perfect in one year. On the hardest exam researchers could design, with questions from PhDs across every field, the best AI went from getting 9% right to over 50%. Sounds incredible. But here's the catch Stanford's report flagged: for real-world tasks like running a sales process or managing customer operations, reliable benchmarks barely exist. What AI can do in a controlled test and what it does inside your business are two very different things.

Why this matters to you:

If someone shows you an AI demo and it looks flawless, that's the test score, not the report card. Ask how it performs with messy data, unclear instructions, and a process that nobody documented. That's the real benchmark. The companies getting value from AI aren't the ones buying the highest-scoring model; they're the ones that designed their operations to work with AI from the start.

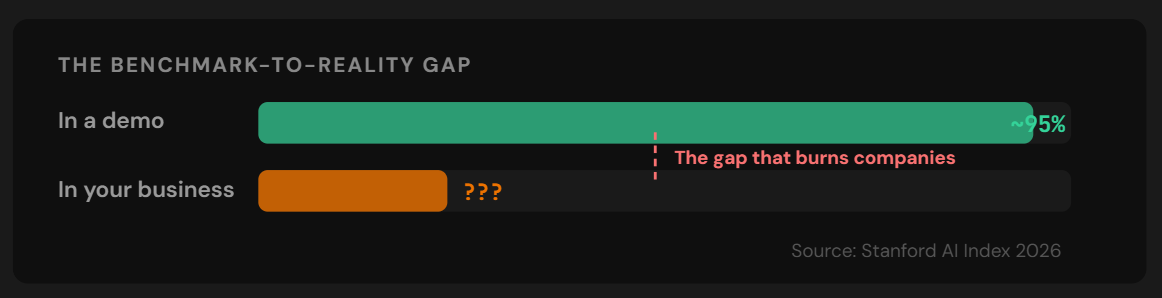

The companies building AI are telling you less about how it works.

Stanford tracks how transparent AI companies are about their technology. This year, transparency scores dropped from 58 to 40 out of 100. The biggest players (Google, Anthropic, OpenAI) have all stopped sharing basic details like how much data their models trained on. Of the 95 major AI models released last year, 80 shipped without their training code. Meanwhile, these companies tripled their presence in government hearings, while academic and independent voices declined.

Why this matters to you:

Think of it this way: you wouldn't hire a senior employee who refused to explain their process. But that's exactly what many businesses are doing with AI. When the most powerful systems are also the least transparent, every leader needs to ask: can I explain to my board, my customers, and my team what our AI is doing and why? If the answer is no, that's not a technology problem; it's a business risk.

The U.S. lead in AI is shrinking fast. That affects your vendor decisions.

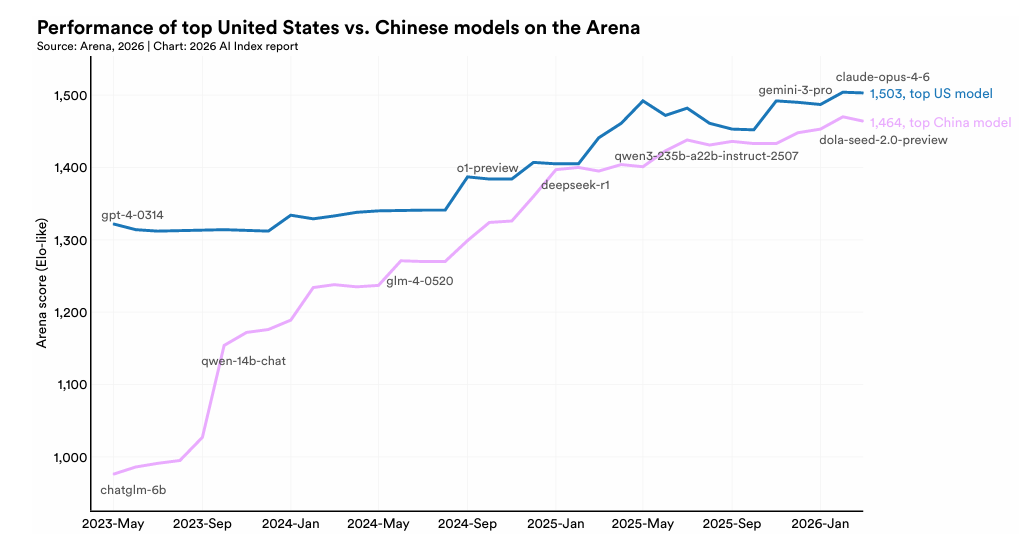

A year ago, American AI companies had a comfortable lead in model quality. Today, the gap between the top U.S. and Chinese AI models is just 2.7 percentage points. China now leads the world in AI research publications, patents, and industrial robot installations. The U.S. still leads in investment ($285.9 billion vs. $12.4 billion in China), but the talent pipeline is drying up: the number of AI researchers moving to the U.S. has dropped 89% since 2017.

Why this matters to you:

If your entire business runs on AI from one provider, in one ecosystem, you're carrying concentration risk you probably haven't priced in. Smart leaders are starting to ask: what happens if our primary AI vendor changes pricing, access, or capabilities overnight? Having a backup plan isn't paranoia; it's basic operational hygiene.

Your customers and employees are nervous about AI.

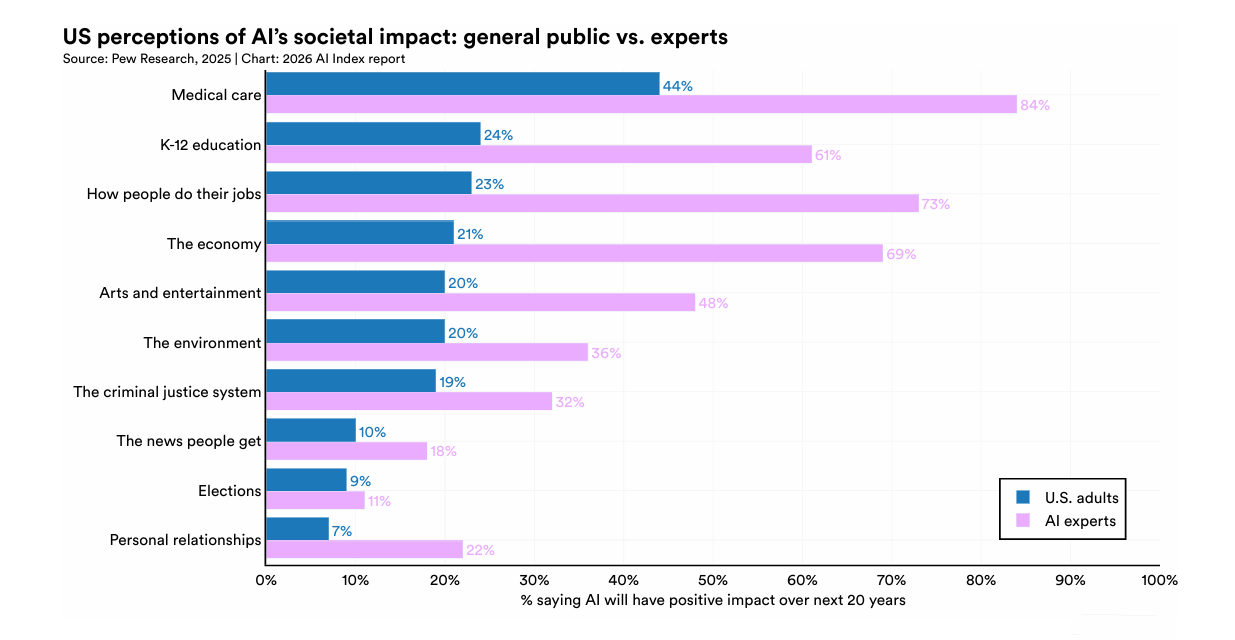

Here's a number that should make every business leader pause: only 10% of Americans say they're more excited than concerned about AI. Compare that to 56% of AI industry experts who are optimistic. In healthcare, 84% of experts see AI as positive, but only 44% of regular people agree. And the U.S. ranked dead last among all countries surveyed in trusting its government to regulate AI, at just 31%.

Why this matters to you:

In a market where everyone's anxious, the companies that win trust will be the ones that can explain their AI clearly: what it does, what it doesn't do, and what happens when it's wrong. Clarity isn't a marketing advantage. It's the entire advantage.

The Deep Dive:

AI Agents Just Got Their Own Wallets. Here's What That Means for Your Business.

While the Stanford report grabbed headlines this week, the most important development in AI is happening in a place most business leaders aren't watching: the payments layer.

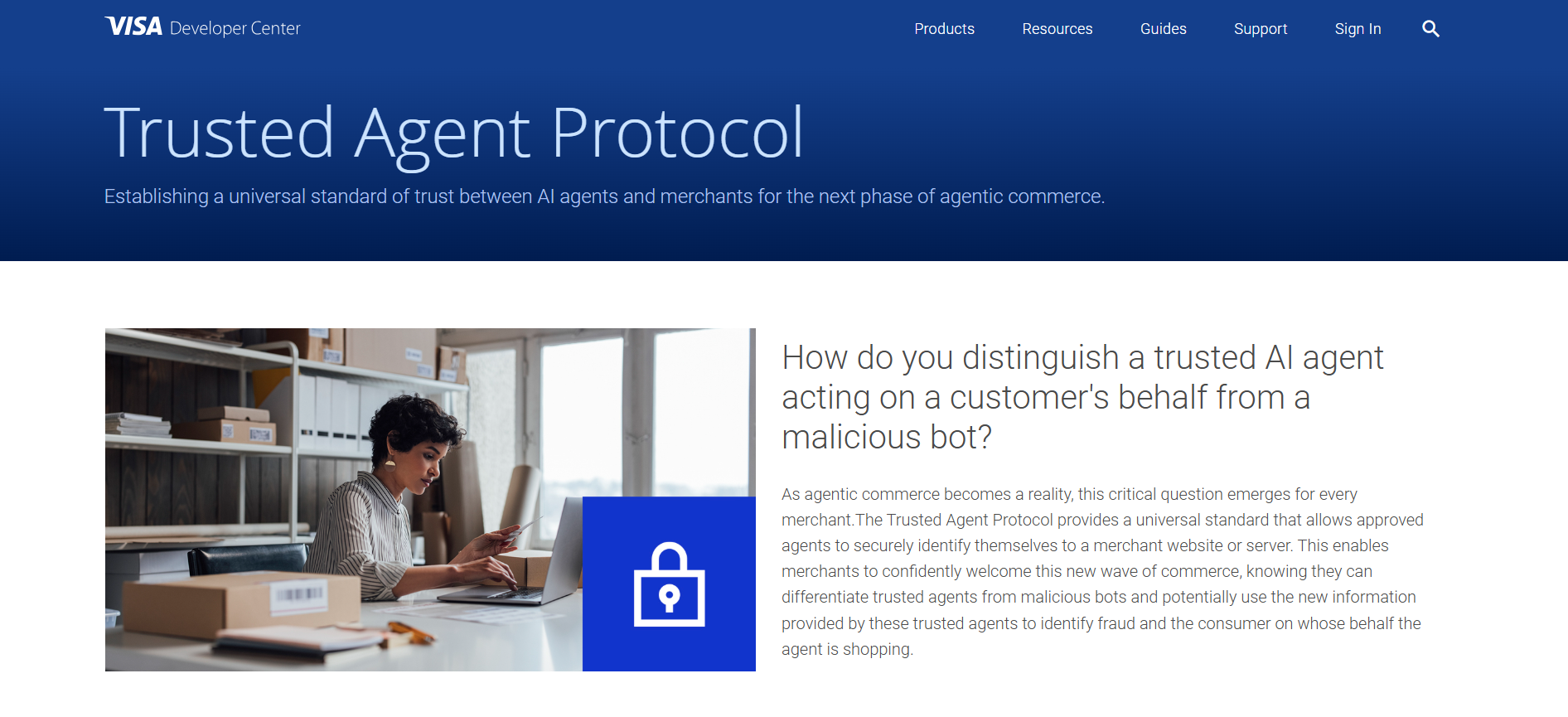

Visa, the company that processes roughly half of all card transactions on Earth, has built the infrastructure for AI agents to spend money. Not humans clicking "buy." Software acting on its own, within rules a business or consumer sets in advance.

Visa has already completed hundreds of real-world agent-initiated transactions with over 100 partners. More than 20 AI agents are plugged directly into their system. And Visa's own forecast: millions of people will have AI agents making purchases on their behalf by the end of this year.

Here's why that matters even if you're not in payments:

- AI is getting IDs and credentials — like a real employee.

Visa created something called the "Trusted Agent Protocol." Cloudflare built "Agent Name Service." These systems give AI agents verified identities, like a passport for software. When an AI agent has credentials that a bank or a vendor can verify, it stops being a tool inside your workflow. It becomes a participant in a transaction. Your AI doesn't just help you write an email; it can negotiate a deal, purchase supplies, and renew a contract. On its own.

If that sounds familiar, it's how we built AI Xccelerate's six revenue employees. Names, roles, accountability. The infrastructure is finally catching up to the idea.

- The sales process is about to happen between two AIs.

Here's the part most people miss. When an AI agent shops for a business (comparing vendors, evaluating pricing, making a decision), it doesn't browse your website the way a human does. It reads data. If your company's offerings aren't structured in a way an AI agent can evaluate, you won't even make the shortlist. The buyer's AI and the seller's AI will increasingly negotiate in the background. Your website's "About" page won't matter. Your data will.

- Nobody's answered the accountability question yet.

If your AI agent picks the wrong vendor, overpays on a contract, or negotiates terms that hurt your business, who's responsible? This isn't hypothetical. The EU already classifies autonomous financial decisions by AI as "high-risk," requiring full logging and explainability. The rules are being written right now. The companies building governance into their AI operations today won't need to scramble when the regulations arrive.
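To make "rules set in advance" and "full logging" concrete, here is a minimal Python sketch of a spending policy that records a reason for every decision. Everything in it is a hypothetical illustration: the `SpendingPolicy` fields, the `authorize` method, the vendor names, and the dollar limits are assumptions, not Visa's Trusted Agent Protocol or any regulator's required format.

```python
from dataclasses import dataclass, field


@dataclass
class SpendingPolicy:
    # Hypothetical rules a business sets before the agent is allowed to buy.
    max_per_purchase: float
    monthly_budget: float
    approved_vendors: set


@dataclass
class AgentLedger:
    policy: SpendingPolicy
    spent_this_month: float = 0.0
    audit_log: list = field(default_factory=list)

    def authorize(self, vendor: str, amount: float) -> bool:
        """Approve or reject a purchase and record why, either way."""
        if vendor not in self.policy.approved_vendors:
            reason = "vendor not on approved list"
        elif amount > self.policy.max_per_purchase:
            reason = "amount exceeds per-purchase limit"
        elif self.spent_this_month + amount > self.policy.monthly_budget:
            reason = "purchase would exceed monthly budget"
        else:
            reason = "within policy"
        approved = reason == "within policy"
        if approved:
            self.spent_this_month += amount
        # Rejections are logged too, so a person can later explain each decision.
        self.audit_log.append(
            {"vendor": vendor, "amount": amount, "approved": approved, "reason": reason}
        )
        return approved


ledger = AgentLedger(SpendingPolicy(500.0, 1200.0, {"Acme Supplies"}))
ledger.authorize("Acme Supplies", 400.0)  # within policy
ledger.authorize("Unknown Co", 50.0)      # rejected: vendor not approved
ledger.authorize("Acme Supplies", 900.0)  # rejected: over per-purchase limit
```

The design choice worth noticing is that the log entry is written on every path, approved or not. That is what lets someone on your team answer "why did the agent do that?" after the fact.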

The companies still thinking about AI as a productivity tool are about to be outpaced by companies thinking about AI as an economic participant: one that transacts, negotiates, and earns.

You don't need to be in fintech to feel this. If you sell to businesses, your future buyers may send an AI agent before they ever send a human. The question is whether your business is legible to someone else's AI.
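What might "legible" look like in practice? One possible reading, sketched in Python below: publish your offerings as structured data that software can compare field by field. The `Offering` fields, the plan names, the prices, and the `cheapest` function (a crude stand-in for a buyer's agent) are all made up for illustration; a real buyer agent may expect a different schema entirely.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class Offering:
    # Hypothetical fields; not a standard schema.
    name: str
    price_usd_per_month: float
    contract_months: int
    support_hours: str


def to_agent_readable(offering: Offering) -> str:
    """Serialize an offering as JSON so software can read it field by field."""
    return json.dumps(asdict(offering), sort_keys=True)


def cheapest(offerings_json: list, max_contract_months: int):
    """Stand-in for a buyer's agent: filter on contract length, then take the lowest price."""
    parsed = [json.loads(o) for o in offerings_json]
    eligible = [o for o in parsed if o["contract_months"] <= max_contract_months]
    return min(eligible, key=lambda o: o["price_usd_per_month"], default=None)


catalog = [
    to_agent_readable(Offering("Starter", 99.0, 12, "business hours")),
    to_agent_readable(Offering("Flex", 129.0, 1, "24/7")),
]
choice = cheapest(catalog, max_contract_months=6)
print(choice["name"])  # prints "Flex"
```

Note that the cheaper plan loses here because its 12-month contract fails the buyer's filter. An agent can only apply that kind of filter when the contract term is a field it can read, not a sentence buried in a PDF.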

The Number That Matters This Week:

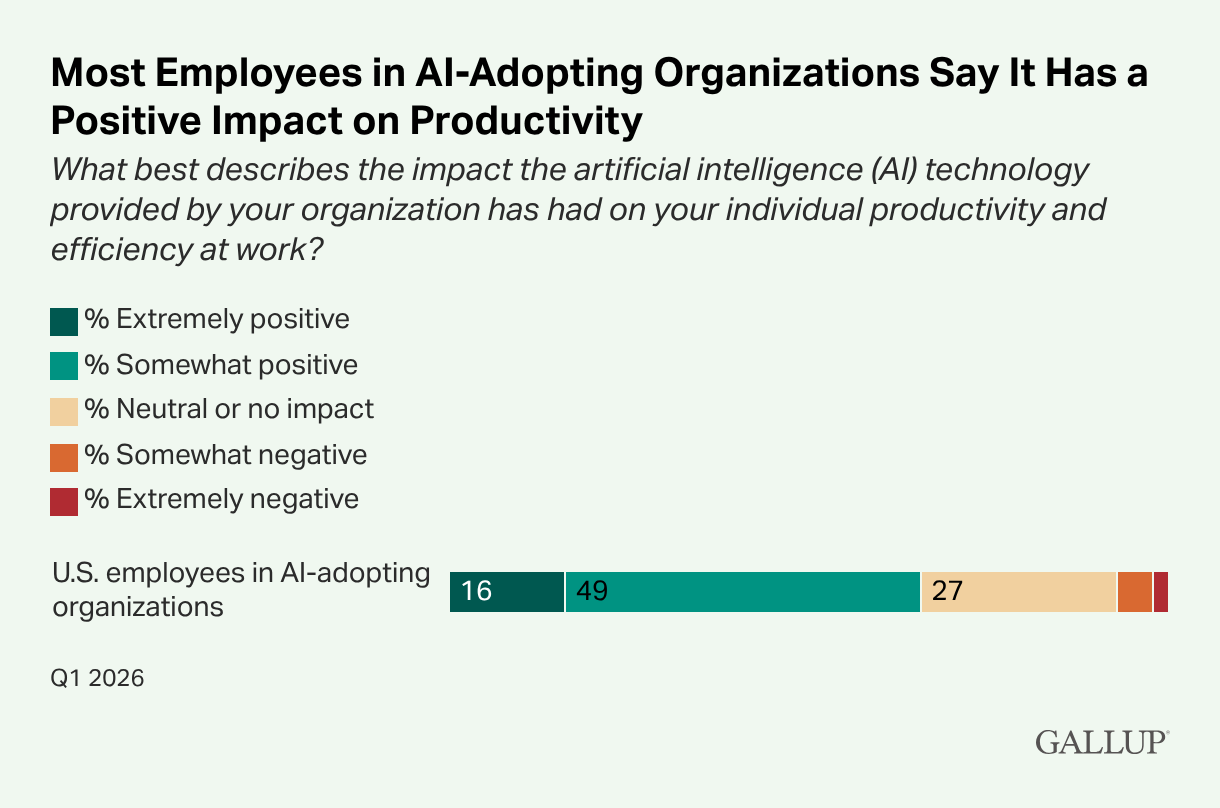

65% of employees say AI has improved their productivity. That's from a Gallup survey of 23,717 American workers released this week. Sounds great until you see the next number:

Only 27% say their workplace has actually changed in meaningful ways.

Translation: at most companies, AI is making individual tasks faster: better emails, quicker reports, smoother scheduling. But the org chart, the team structure, the way the business actually runs? Untouched.

That's the gap. The difference between a company that uses AI and a company that's built around it. The first kind saves an hour a week. The second kind saves a headcount line on the P&L.

Take This to Your Next Leadership Meeting

If AI agents can now spend money, negotiate deals, and talk to your customers' AI, who on your team is responsible for managing that?

If that question landed, we should talk. Not a demo. A conversation about what AI ownership actually looks like when it's built into the team, not bolted on top.

→ Book 15 minutes: book.aixccelerate.com